What a Machine Learning Researcher Taught Me About ASO

How I adapted Andrej Karpathy's autonomous AI research pattern into a metadata optimization system that runs up to 25 experiments while I sleep. The secret: encoding your methodology into a scorer, not a prompt.

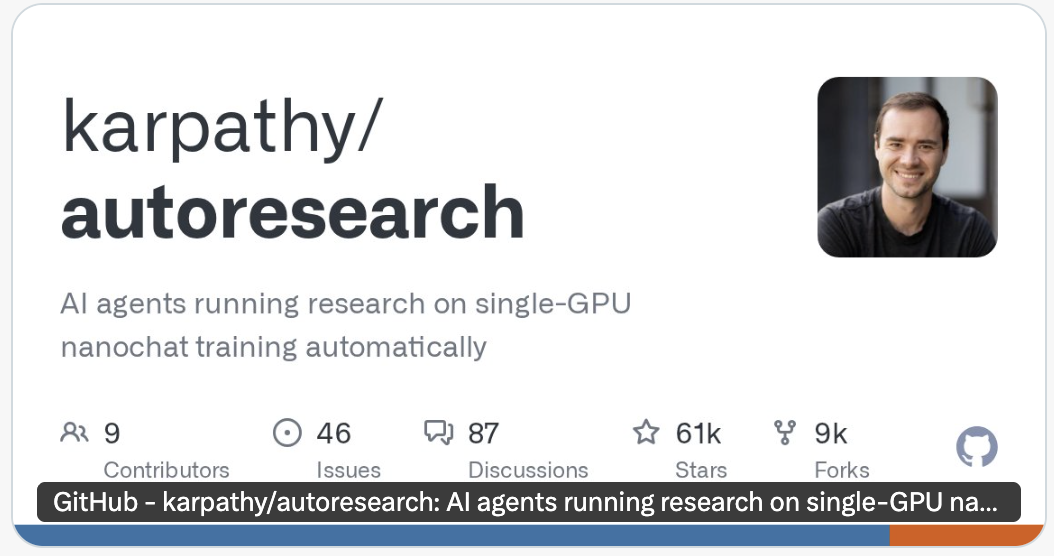

A few weeks ago, I came across a GitHub repository from Andrej Karpathy called autoresearch. If you don’t recognize the name, Karpathy is one of the most respected AI researchers in the world. Former head of AI at Tesla, founding member of OpenAI. The repo had 61,000 stars, which in developer terms means a lot of people found it valuable.

The idea was simple. Give an AI agent a machine learning model, a scoring function, and a set of instructions. The agent edits the model, trains it for five minutes, checks if the score improved. If it did, keep the change. If it didn’t, discard it and try something else. Repeat this loop overnight. Wake up to 100 completed experiments and a significantly better model.

I read through the repo and something clicked. This is exactly what metadata optimization is.

The Pattern Transfer

Think about what Karpathy’s system actually does. You have a constrained space (a model architecture). You have a quantitative measure of quality (validation loss). You have an agent that makes one change at a time, measures the impact, and keeps only what works. The human’s job is to define what “good” looks like and encode the strategy into instructions. The agent’s job is to grind through experiments faster than any human could.

Now replace the ML terms with ASO terms.

The constrained space is your metadata. On iOS, that is 30 characters for the title, 30 for the subtitle, and 100 for the keyword field. On Google Play, it is the long description, capped at 4,000 characters. The quantitative measure is whether your metadata covers the right keywords with the right weighting and placement. The experiment loop is: edit the metadata, score it, keep or discard.
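To make the "constrained space" concrete, here is a minimal sketch of a limit check for the three iOS fields. The limits come from the paragraph above; the field names, the function name `check_limits`, and the sample metadata are my own illustrative assumptions.

```python
# iOS field limits quoted in the text; the field names are illustrative.
IOS_LIMITS = {"title": 30, "subtitle": 30, "keywords": 100}


def check_limits(metadata, limits=IOS_LIMITS):
    """Return remaining characters per field; a negative value means over the limit."""
    return {field: limit - len(metadata.get(field, ""))
            for field, limit in limits.items()}


# Made-up sample metadata, not taken from any real app.
example = {
    "title": "Budget Planner: Expense Tracker",
    "subtitle": "Track Spending & Save Money",
    "keywords": "finance,bills,wallet,income,savings",
}

remaining = check_limits(example)
for field, left in remaining.items():
    status = "OVER LIMIT" if left < 0 else "ok"
    print(f"{field}: {left} characters remaining ({status})")
```

In this sample the title is one character too long, which is the kind of hard constraint a scorer can enforce before it evaluates anything else.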

The pattern is identical. The domain is different.

What I Had Before (And Why It Wasn’t Enough)

I had been using AI for metadata optimization for a while. I even built a dedicated skill that contained my full ASO methodology, including my 3D Keyword Framework for categorizing and prioritizing keywords. And the results were inconsistent. Sometimes I would get output that was genuinely good. Other times, the AI would put words like “amazing” or “cool” in the title. Words that carry zero ranking weight and waste characters in the most valuable real estate your app has.

The deeper problem was not the methodology itself. It was that every session, I found myself re-explaining the same things. You can’t just look at search volume. You need to weigh difficulty, relevancy tier, and keyword cluster membership together. The categorizations in the 3D Keyword Framework exist for a reason: they encode the logic of which keywords actually matter and why. But having definitions is not the same as having a scoring system. The AI understood the labels. It did not understand the math behind the decisions.

I could describe what good metadata looks like. But I had no way to make the AI verify its own work against that standard.

That was the gap. And Karpathy’s pattern filled it.

What I Built

I built two tools following the exact same architecture. One for iOS metadata (title, subtitle, keyword field). One for Google Play long descriptions.

Each tool has three files.

program.md contains the full optimization methodology. The rules, the priorities, the strategies. What to fix first. What trade-offs to make. When to stop. This is where the domain expertise lives.

score.py is a scoring function that takes a metadata file and a keyword list and returns a composite score out of 100. It breaks down into components: keyword coverage, placement accuracy, character efficiency, keyword density, duplication detection, and more. Every aspect of “what good metadata looks like” is quantified with specific weights.
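A composite scorer of this kind might look roughly like the sketch below. It is not the actual score.py: the three components, their weights, and the formulas are simplified assumptions chosen only to show how weighted components add up to a score out of 100.

```python
import re

# Hypothetical weights summing to 100; the article does not publish the real ones.
WEIGHTS = {"coverage": 50, "efficiency": 30, "uniqueness": 20}
# iOS field limits quoted earlier in the article.
LIMITS = {"title": 30, "subtitle": 30, "keywords": 100}


def tokenize(text):
    """Lowercase a string and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(metadata, keywords):
    """Score metadata (field -> text) against keywords (phrase -> weight).

    Returns (total, breakdown), where breakdown maps each component to its points.
    """
    words = [w for field in LIMITS for w in tokenize(metadata.get(field, ""))]
    present = set(words)

    # Coverage: weighted share of phrases whose words all appear in some field.
    total_weight = sum(keywords.values())
    covered = sum(wt for phrase, wt in keywords.items()
                  if set(tokenize(phrase)) <= present)
    coverage = covered / total_weight if total_weight else 0.0

    # Efficiency: average share of each field's limit in use; over-limit fields get 0.
    used = []
    for field, limit in LIMITS.items():
        length = len(metadata.get(field, ""))
        used.append(length / limit if length <= limit else 0.0)
    efficiency = sum(used) / len(used)

    # Uniqueness: repeated words waste characters, so reward distinct ones.
    uniqueness = len(present) / len(words) if words else 0.0

    ratios = {"coverage": coverage, "efficiency": efficiency, "uniqueness": uniqueness}
    breakdown = {name: WEIGHTS[name] * ratio for name, ratio in ratios.items()}
    return sum(breakdown.values()), breakdown


# Made-up sample inputs for illustration.
metadata = {"title": "Budget Planner", "subtitle": "Expense Tracker",
            "keywords": "finance,bills,budget"}
keywords = {"budget planner": 3, "expense tracker": 2, "bill reminder": 1}

total, breakdown = score(metadata, keywords)
print(f"{total:.1f} / 100", breakdown)
```

Returning the breakdown alongside the total matters: it tells the agent which component is dragging the score down, so the next experiment can target it.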

The metadata file is the only thing the AI is allowed to edit.

There is no orchestrator script. No automation wrapper. The AI agent reads the program, runs the scorer, makes a change, scores again, and decides to keep or discard. The agent is the loop. It commits improvements, resets failures, logs every experiment to a results file, and keeps going until it plateaus or hits the experiment limit.
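In my setup the agent carries out this loop itself, so the code below is not part of the tool. It is only a sketch that spells out the keep-or-discard rule in Python. The function names, the `patience` plateau threshold, and the toy scorer and proposer are all hypothetical stand-ins; an in-memory "best" copy stands in for commits and resets.

```python
import random


def run_experiments(metadata, score_fn, propose_fn, max_experiments=25, patience=8):
    """Hill-climb: try one change at a time and keep it only if the score improves."""
    best, best_score = dict(metadata), score_fn(metadata)
    log = []   # one entry per experiment, like a results file
    stale = 0  # consecutive experiments without improvement
    for i in range(1, max_experiments + 1):
        candidate = propose_fn(best)
        candidate_score = score_fn(candidate)
        kept = candidate_score > best_score
        log.append({"experiment": i, "score": candidate_score, "kept": kept})
        if kept:
            best, best_score, stale = candidate, candidate_score, 0  # "commit"
        else:
            stale += 1                                               # "reset"
            if stale >= patience:  # plateau: stop early
                break
    return best, best_score, log


# Toy scorer and proposer so the sketch runs on its own.
TARGETS = {"budget", "planner", "expense", "tracker"}
POOL = ["budget", "planner", "expense", "tracker", "amazing", "cool"]
rng = random.Random(0)


def toy_score(md):
    """Count target words in the title; titles over 30 characters score 0."""
    title = md["title"]
    if len(title) > 30:
        return 0
    return len(TARGETS & set(title.lower().split()))


def toy_propose(md):
    """Swap one randomly chosen title word for a random word from the pool."""
    words = md["title"].split()
    words[rng.randrange(len(words))] = rng.choice(POOL)
    return {**md, "title": " ".join(words)}


start = {"title": "amazing cool budget app"}
best, best_score, log = run_experiments(start, toy_score, toy_propose)
print(best, best_score, f"{len(log)} experiments")
```

Note that the scorer is passed in and never modified inside the loop, which mirrors the rule that only the metadata may change.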

The critical design decision: the agent cannot edit the scorer. It can only edit the metadata. This forces the AI to optimize the actual work product, not game the evaluation. The same principle Karpathy applied to ML training.

Why the Scorer Changes Everything

Here is what most people get wrong about using AI for optimization work. They give the AI a pile of inputs and a description of what “good” looks like, and expect quality output. But “good” described in words is ambiguous. “Good” expressed as a number is not.

Take a concrete example. If you hand an AI 500 keywords and say “optimize my iOS metadata,” the result will be mediocre. Not because the keywords are bad. Not because the AI is incapable. Because you gave it no decision criteria. No way to differentiate between a NorthStar keyword that must appear in the title and a low-relevance keyword that belongs in the keyword field (if it belongs anywhere at all). No way to evaluate whether swapping one word for another actually improved coverage or just moved laterally. Whatever the AI assumes about those 500 keywords, you get that assumption as your result.

The scorer eliminates assumptions. Every keyword has a relevancy tier, a volume weight, and placement rules. Every word in the title is evaluated for whether it contributes to tracked keyword combinations. Dead weight is penalized. Character waste is penalized. Missing NorthStar defense is penalized. The AI does not need to guess what matters. The score tells it.
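Two of these checks, dead weight in the title and missing NorthStar keywords, can be sketched in a few lines. This is an illustration under simplifying assumptions, not the real scorer: the `audit_title` function, the `"secondary"` tier label, and the sample keywords are hypothetical, and a real version would turn these findings into weighted penalties.

```python
import re


def tokenize(text):
    """Lowercase a string and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def audit_title(title, keywords):
    """Find title words that serve no tracked keyword, and NorthStar phrases not in the title.

    keywords: list of dicts with a 'phrase' and a 'tier'.
    """
    title_words = tokenize(title)
    tracked_words = {w for kw in keywords for w in tokenize(kw["phrase"])}

    # Dead weight: title words that contribute to no tracked keyword phrase.
    dead_weight = [w for w in title_words if w not in tracked_words]

    # NorthStar defense: every NorthStar phrase should be fully present in the title.
    missing_northstar = [
        kw["phrase"] for kw in keywords
        if kw["tier"] == "northstar"
        and not set(tokenize(kw["phrase"])) <= set(title_words)
    ]
    return dead_weight, missing_northstar


# Made-up sample keyword list for illustration.
keywords = [
    {"phrase": "budget planner", "tier": "northstar"},
    {"phrase": "expense tracker", "tier": "northstar"},
    {"phrase": "bill reminder", "tier": "secondary"},
]

dead_weight, missing_northstar = audit_title("Amazing Budget Planner", keywords)
print("dead weight:", dead_weight)
print("missing NorthStar:", missing_northstar)
```

Here "amazing" is flagged because it appears in no tracked phrase, and "expense tracker" is flagged because a NorthStar keyword is absent from the title.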

And because the loop runs autonomously, the AI makes 15 to 25 targeted experiments per session. Each one is a single change, scored against the previous best, kept or discarded on merit. That volume of systematic experimentation is something no human would do manually. We would get to “good enough” after three or four rounds and move on. The agent keeps going.

The Results

I have run this on multiple apps across iOS and Google Play. The details stay confidential, but the pattern is consistent. Metadata that started with significant gaps in keyword coverage, wasted characters, and misplaced high-value keywords ended up dramatically tighter. Not just marginally better. The difference between the skill-based approach and the scorer-based approach was night and day.

More importantly, the quality floor changed. With the skill-only approach, I could get a great result or a mediocre one depending on the session. With the scorer in the loop, the floor is high. Every run produces output that meets a quantitative standard, because the AI cannot commit a change that makes the score worse.

The Takeaway

Most mid-to-senior growth practitioners know what good looks like. We carry frameworks, heuristics, and years of pattern recognition that tell us when metadata is strong and when it is not. The challenge with AI is not that it lacks intelligence. It is that we have not learned to translate our expertise into systems that AI can execute against with precision.

Karpathy’s contribution was not the agent or the code. It was the pattern: encode your methodology into a scorer, give the AI a clear loop, and let it run. That pattern transfers to any optimization problem where you can define “better” quantitatively. Metadata. Long descriptions. Screenshot copy. Possibly even creative testing, if you can define the scoring function.

The system and the knowledge are everything. AI is just a new way to process them.

Written by Kevser Imirogullari

Independent mobile marketing consultant helping apps by connecting acquisition, store, and monetization insights they missed.
